For years, debugging followed a fairly reliable pattern inside software teams. Something failed, engineers traced the issue, identified the faulty logic, fixed it, and moved on. Even in large distributed systems, there was usually a clear assumption underneath the process: if enough visibility existed, the root cause could eventually be found.

AI-driven applications are quietly changing that assumption.

The difficulty is not that these systems fail more often. In many cases, they do not fail in ways that are immediately visible at all. They keep running: APIs respond normally, infrastructure looks healthy, and dashboards stay green. Yet beneath that surface stability, outcomes begin to shift. Recommendations lose relevance, prediction quality weakens, automated decisions become inconsistent, and user trust slowly erodes before anyone notices a deeper issue.

This is where debugging becomes fundamentally different from what most engineering organizations are used to. The challenge is no longer centered on broken functionality. It is centered on detecting subtle behavioral drift inside systems that still appear operational.

The Problem No Longer Lives in One Place

Traditional systems were designed around explicit logic. Developers wrote rules, workflows, and conditions that behaved predictably under defined circumstances. When something went wrong, teams could usually narrow the issue down to a service, dependency, function, or infrastructure bottleneck.

AI systems operate in a completely different way because behavior is learned rather than directly programmed.

That shift changes the nature of debugging itself. A problematic output may not come from a single technical flaw. It could emerge from several smaller influences interacting together:

- changes in incoming data

- shifting user behavior

- feature weighting adjustments

- model retraining differences

- evolving production environments

What makes this difficult is that each factor may appear harmless individually. The problem only becomes visible when those factors combine over time and gradually alter system behavior.

As a result, engineering teams spend less time searching for isolated failures and more time trying to understand why the system is behaving differently from before. That distinction changes the entire debugging process because the issue is no longer static. It evolves alongside the environment around it.

Visibility Starts Breaking Down

Once organizations begin dealing with learned behavior instead of fixed logic, another challenge quickly appears: limited explainability.

Many AI-driven applications can produce highly accurate outcomes while offering very little visibility into how decisions are made internally. Teams can observe outputs with precision, but understanding why a specific outcome occurred is often far more complicated. This creates a frustrating gap between observation and interpretation.

In traditional software environments, engineers could inspect execution paths and understand system reasoning step by step. With modern AI architectures, especially large neural systems, decision pathways are often distributed across layers that are difficult to interpret directly.

The impact of this problem extends beyond engineering teams. Limited explainability affects:

- operational trust

- regulatory confidence

- compliance requirements

- customer accountability

- executive oversight

An organization may know that a model is underperforming without fully understanding why it is underperforming. That uncertainty creates hesitation, slower decision-making, and growing operational risk, especially in environments where AI outputs influence customer experiences or business-critical decisions.

Data Is Quietly Becoming the Real System Logic

One of the biggest operational shifts happening inside AI environments is the changing role of data.

In traditional software, data supported the application. In AI systems, data actively shapes application behavior. That difference is more important than many organizations initially realize.

A recommendation model trained six months ago may continue running perfectly from a technical perspective while becoming progressively less effective because user behavior has changed. Fraud detection systems may slowly lose sensitivity as attack patterns evolve. Customer support automation may begin generating weaker responses simply because the incoming interaction patterns no longer resemble the data used during training. None of these failures necessarily originate from bad code.

They emerge because the environment surrounding the model changes continuously while the assumptions inside the model remain static. This creates a debugging challenge that looks very different from traditional software troubleshooting. Teams now have to monitor not only application health, but also whether the system’s understanding of reality is still accurate.

That introduces an entirely new operational layer where engineering and data quality become tightly connected.
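One common way to check whether a model's "understanding of reality" still holds is to compare the distribution of an input feature in production against the distribution seen during training. The sketch below uses the Population Stability Index (PSI), a widely used drift score, implemented with the standard library only. It is an illustration under stated assumptions, not a specific product's method: the function name, the equal-width binning, the `eps` smoothing value, and the sample data are all hypothetical choices made for clarity.

```python
import math
from typing import Sequence


def population_stability_index(
    expected: Sequence[float],
    actual: Sequence[float],
    bins: int = 10,
    eps: float = 1e-6,
) -> float:
    """PSI between a training-time sample (expected) and a production
    sample (actual) of one numeric feature. Bin edges come from the
    training sample; equal-width bins are an illustrative choice."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant feature

    def proportions(values: Sequence[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            # Values outside the training range are clamped into the edge bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # eps keeps empty bins from causing log(0) or division by zero.
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Hypothetical data: a feature as seen in training vs. the same feature
# after user behavior has shifted upward.
training = [i / 100 for i in range(1000)]
production = [v + 3.0 for v in training]

print(population_stability_index(training, training))    # 0.0, no drift
print(population_stability_index(training, production))  # large value, clear drift
```

A PSI of 0 means the binned distributions match. Practitioners often treat values above roughly 0.25 as significant drift, but that cutoff is a rule of thumb; a workable threshold depends on the feature and on how much a shift actually affects outcomes.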

Stability Can No Longer Be Assumed

One of the foundations of traditional debugging was reproducibility. If teams could recreate an issue consistently, they could usually isolate it, validate fixes, and restore confidence.

AI-driven applications weaken that assumption significantly.

The same system may produce slightly different outcomes over time even without major deployment changes. Retraining cycles, probabilistic inference behavior, evolving datasets, and infrastructure updates all introduce variability into production environments.

This creates situations where:

- issues appear intermittently

- outputs drift gradually instead of failing instantly

- performance degradation becomes difficult to measure early

- validation cycles take longer because consistency is harder to guarantee

What makes this particularly challenging is that many AI failures operate below visibility thresholds for long periods. Systems continue functioning well enough to avoid immediate alarms while slowly creating downstream business impact.

By the time the issue becomes obvious externally, organizations are often dealing with accumulated operational damage rather than a contained technical problem.
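The gap between gradual drift and a hard alarm can be illustrated with a small sketch: instead of alerting on individual failures, compare the mean of a recent window of a quality metric against a fixed baseline. The class name, the 50-observation window, the 5% tolerance, and the simulated quality series below are hypothetical. The metric itself could be anything a team already measures, such as daily precision on a labeled sample.

```python
from collections import deque
from statistics import fmean


class DegradationMonitor:
    """Flags slow degradation by comparing a rolling window of a quality
    metric against a fixed baseline, rather than alerting only on hard failures."""

    def __init__(self, baseline: float, window: int = 50, max_relative_drop: float = 0.05):
        self.baseline = baseline
        self.max_relative_drop = max_relative_drop
        self.recent: deque[float] = deque(maxlen=window)  # oldest values fall off automatically

    def observe(self, value: float) -> bool:
        """Record one measurement; return True once the window is full and
        its mean has fallen more than max_relative_drop below the baseline."""
        self.recent.append(value)
        if len(self.recent) < self.recent.maxlen:
            return False
        drop = (self.baseline - fmean(self.recent)) / self.baseline
        return drop > self.max_relative_drop


# Simulated metric that loses 0.0005 points per day: no single day looks alarming.
monitor = DegradationMonitor(baseline=0.90, window=50, max_relative_drop=0.05)
first_alert = None
for day in range(365):
    quality = 0.90 - 0.0005 * day
    if monitor.observe(quality) and first_alert is None:
        first_alert = day

print(first_alert)  # 115
```

In this simulated series, consecutive days differ by only 0.0005, yet the monitor fires on day 115, once the rolling mean has slipped just over 5% below the baseline. The window size trades detection speed against sensitivity to noise.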

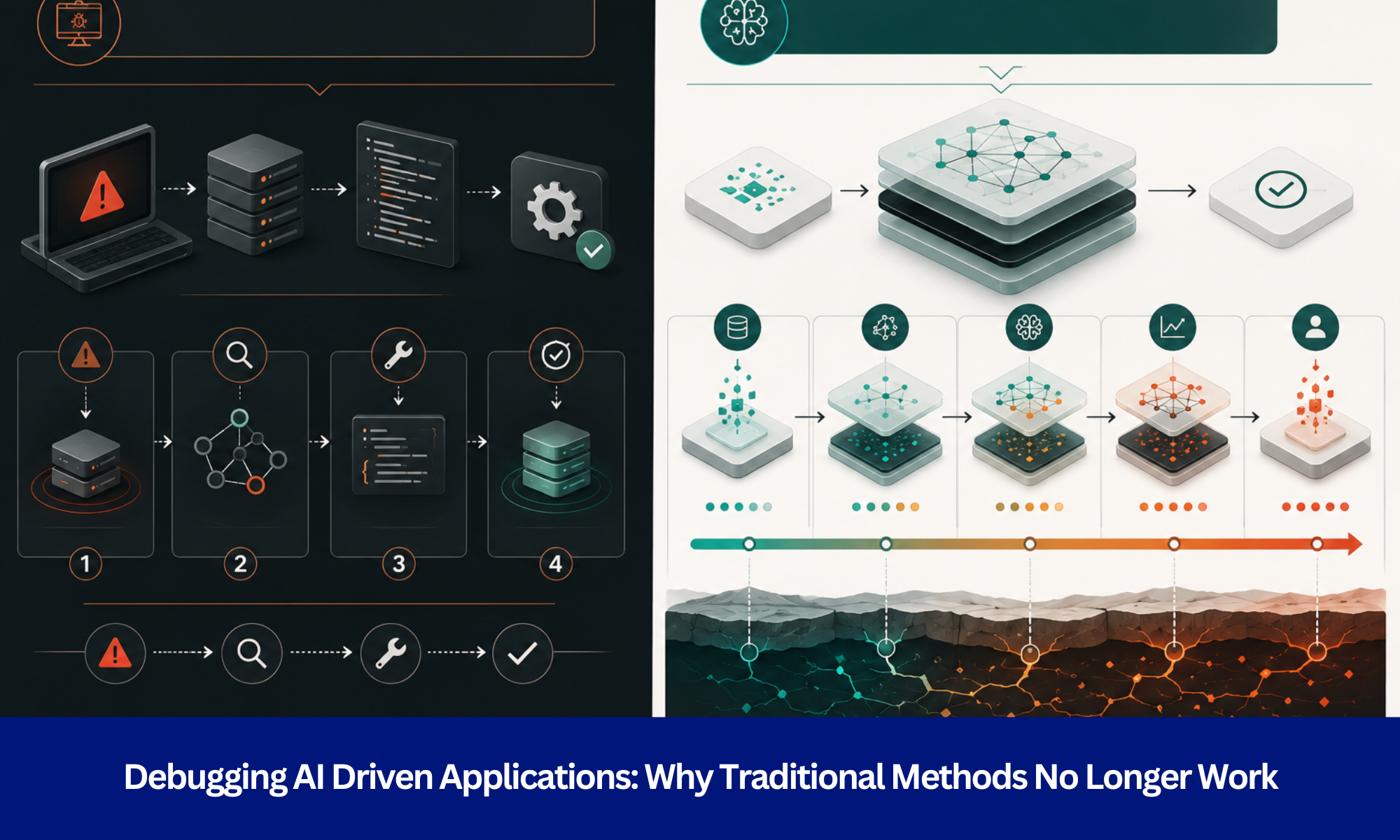

Debugging Is Turning Into Continuous Oversight

These changes are forcing organizations to rethink debugging as an ongoing operational discipline rather than a reactive engineering activity.

Traditional debugging followed a linear cycle:

- detect failure

- identify cause

- apply fix

- validate stability

AI systems rarely behave in such a contained way because their environments never stop changing.

As a result, modern AI operations depend heavily on continuous observation. Teams increasingly need visibility into:

- prediction consistency

- confidence fluctuations

- data drift patterns

- behavioral anomalies

- long-term model performance trends

This is not simply a tooling upgrade. It is a shift in operational mindset.

Organizations are moving from maintaining static systems to supervising adaptive systems that continuously evolve after deployment. That distinction changes how reliability itself is defined. Reliability no longer means “the system works.” It increasingly means “the system continues behaving within acceptable boundaries over time.”
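"Behaving within acceptable boundaries over time" can be made concrete as a behavioral envelope: a set of per-batch metrics, each with an allowed range, checked continuously. The sketch below tracks two of the signals listed above, mean prediction confidence and the share of low-confidence outputs, plus a positive-prediction rate as a simple proxy for prediction consistency. It assumes a binary classifier, and every metric name, threshold, and data point is a hypothetical placeholder; real ranges would come from a validated baseline for the specific model.

```python
from dataclasses import dataclass
from statistics import fmean


@dataclass(frozen=True)
class Bound:
    low: float
    high: float


# Hypothetical envelope; real ranges would be derived from a validated baseline.
ENVELOPE = {
    "mean_confidence": Bound(0.70, 1.00),
    "low_confidence_rate": Bound(0.00, 0.15),
    "positive_rate": Bound(0.05, 0.20),
}


def batch_metrics(
    confidences: list[float], predictions: list[int], low_threshold: float = 0.5
) -> dict[str, float]:
    """Summarize one batch of binary model outputs."""
    n = len(confidences)
    return {
        "mean_confidence": fmean(confidences),
        "low_confidence_rate": sum(c < low_threshold for c in confidences) / n,
        "positive_rate": sum(predictions) / n,
    }


def out_of_bounds(metrics: dict[str, float], envelope: dict[str, Bound]) -> dict[str, float]:
    """Return only the metrics that fall outside their allowed range."""
    return {
        name: value
        for name, value in metrics.items()
        if name in envelope and not (envelope[name].low <= value <= envelope[name].high)
    }


# Hypothetical batch: each output looks plausible, but confidence has sagged.
confidences = [0.9, 0.85, 0.4, 0.95, 0.3, 0.45, 0.8, 0.92, 0.35, 0.88]
predictions = [0, 0, 1, 0, 0, 0, 0, 0, 0, 0]

violations = out_of_bounds(batch_metrics(confidences, predictions), ENVELOPE)
print(sorted(violations))  # ['low_confidence_rate', 'mean_confidence']
```

Running a check like this on every batch, and keeping the results, turns "the system works" into a measurable statement about whether the system is still inside its expected range.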

The Organizational Impact Is Bigger Than Most Teams Expect

Many companies initially treat AI debugging as a technical scaling issue. Over time, they realize the challenge affects organizational structure just as much as engineering workflows.

The reason is simple. AI reliability depends on coordination across multiple domains simultaneously. Engineering teams manage infrastructure and deployment. Data teams manage training quality and feature pipelines. Operations teams monitor production behavior. Leadership teams increasingly become responsible for governance, accountability, and risk visibility. Those responsibilities overlap constantly.

This changes the nature of ownership inside technology organizations because no single team fully controls system behavior anymore. Managing AI-driven applications becomes less about isolated technical expertise and more about coordinated operational awareness across the organization.

The companies adapting successfully are usually the ones that stop treating AI oversight as a support function and start treating it as a long-term operational capability.

The Companies That Win Will Not Be the Ones With the Most AI

A few years ago, competitive advantage in AI was largely tied to adoption speed. Organizations focused on building models faster, automating aggressively, and expanding AI capabilities across products and operations.

That mindset is beginning to change.

As AI systems become more deeply integrated into business operations, long-term advantage increasingly comes from control, observability, and reliability rather than raw deployment volume.

Organizations that can understand how their systems evolve over time will operate with significantly greater confidence. They will detect performance shifts earlier, reduce operational surprises, and make decisions faster because visibility into system behavior remains stronger.

In practical terms, this means the future advantage may not belong to the companies deploying the most AI. It will likely belong to the companies that understand their AI systems well enough to manage them continuously without losing trust in the process.

Build AI Systems That Stay Reliable as They Evolve

AI-driven applications require a very different operational mindset because the systems themselves behave differently after deployment. Traditional debugging approaches were designed for static logic, predictable execution paths, and clearly visible failures. Modern AI environments no longer operate within those assumptions.

Evermethod Inc helps organizations build AI systems with stronger observability, long-term reliability, and continuous operational control so teams can move beyond reactive troubleshooting and manage evolving systems with confidence.
