Artificial intelligence is no longer an emerging concept in healthcare. It is actively assisting radiologists in image interpretation, predicting patient deterioration in intensive care units, supporting early disease detection, and optimizing hospital resource planning. Health systems are integrating AI into clinical decision support, revenue cycle management, and population health analytics.

Adoption is accelerating. Expectations are rising. Yet one concern consistently shapes executive and clinical discussions:

Can we rely on decisions we cannot clearly explain?

Many high-performing AI systems generate accurate predictions but offer limited insight into how those predictions are formed. In healthcare, this opacity introduces risk. Clinical decisions require accountability. Regulatory environments demand documentation. Patients expect transparency.

Accuracy alone does not establish trust.

Explainable AI, commonly abbreviated as XAI, addresses this challenge. It enables healthcare organizations to move from experimental AI deployment to institutional confidence.

The Black Box Problem in Clinical Environments

Modern AI models, particularly deep neural networks, excel at recognizing patterns across complex and high-dimensional datasets. In radiology, they identify subtle imaging features. In predictive analytics, they estimate probabilities of sepsis, readmission, or cardiac events. In operational systems, they forecast staffing needs and patient flow.

However, many of these models operate as statistical abstractions. They process inputs and produce outputs without exposing the reasoning pathway in a human-readable form.

In healthcare, this creates several structural challenges:

- Clinicians must justify treatment decisions.

- Hospitals must defend care standards.

- Regulators require auditability.

- Patients deserve informed explanations.

If an AI system flags a patient as high risk but cannot clarify why, clinicians are forced into a difficult position. They must either trust an opaque output or override it based on experience. Neither scenario supports scalable AI adoption.

The issue is not whether AI can be accurate. The issue is whether it can be accountable.

Healthcare systems are built around traceability. Every prescription, diagnostic step, and intervention is documented. AI systems must operate within that same discipline.

What Explainable AI Means in Healthcare Practice

Explainable AI refers to systems that provide understandable reasoning for their predictions or recommendations. In healthcare, that understanding must align with clinical logic and operational workflows.

Effective Explainable AI in medical environments typically includes:

Feature Attribution

The ability to identify which patient variables influenced a prediction. For example, a risk model should clarify whether elevated white blood cell count, blood pressure trends, or comorbidities drove a sepsis alert.
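
As a rough illustration, feature attribution is easiest to see in a linear model, where each variable's contribution to the log-odds is its weight times its deviation from a reference value. The sketch below assumes a hypothetical logistic sepsis model; the names `WEIGHTS`, `BASELINE`, and `INTERCEPT` and all numbers are invented for the example and are not clinically validated. Non-linear models generally need dedicated attribution methods instead.

```python
import math

# Hypothetical coefficients for an illustrative logistic sepsis-risk model.
# All values are made up for this example and are not clinically validated.
WEIGHTS = {"wbc_count": 0.12, "systolic_bp": -0.03, "comorbidity_count": 0.40}
BASELINE = {"wbc_count": 8.0, "systolic_bp": 120.0, "comorbidity_count": 1.0}
INTERCEPT = -2.0  # log-odds for a patient sitting exactly at the baseline


def attribute(patient):
    """Return each feature's contribution to the log-odds, relative to baseline."""
    return {name: WEIGHTS[name] * (patient[name] - BASELINE[name]) for name in WEIGHTS}


def risk(patient):
    """Convert the intercept plus summed contributions into a probability."""
    log_odds = INTERCEPT + sum(attribute(patient).values())
    return 1.0 / (1.0 + math.exp(-log_odds))


patient = {"wbc_count": 18.0, "systolic_bp": 90.0, "comorbidity_count": 3.0}
contributions = attribute(patient)

# Rank features by the size of their influence so the strongest driver is listed first.
ranked = sorted(contributions.items(), key=lambda item: abs(item[1]), reverse=True)
for name, value in ranked:
    print(f"{name}: {value:+.2f} log-odds")
print(f"predicted risk: {risk(patient):.2f}")
```

For this invented patient, the elevated white blood cell count is the largest driver, followed by low blood pressure and comorbidities, which is the kind of ranked summary a clinician could check against the chart.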

Confidence and Uncertainty Indicators

Clinicians need to know how reliable a prediction is. A probability score without context may be misleading.
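
One possible way to add that context is to report how often past alerts at a similar score turned out to be correct, together with an interval that reflects how much evidence sits behind the figure. The sketch below uses the Wilson score interval for a proportion; the counts are hypothetical, and `wilson_interval` is an illustrative helper rather than part of any particular product.

```python
import math


def wilson_interval(events, total, z=1.96):
    """Wilson score interval for a proportion (z=1.96 gives roughly 95% coverage)."""
    if total <= 0:
        raise ValueError("total must be positive")
    p = events / total
    denom = 1.0 + z * z / total
    center = (p + z * z / (2.0 * total)) / denom
    half_width = z * math.sqrt(p * (1.0 - p) / total + z * z / (4.0 * total * total)) / denom
    return center - half_width, center + half_width


# Hypothetical history: 30 of 40 past alerts in this score band were confirmed.
low, high = wilson_interval(30, 40)
print(f"observed rate 0.75, plausible range {low:.2f} to {high:.2f}")

# The same observed rate backed by ten times more cases gives a tighter range.
low_large, high_large = wilson_interval(300, 400)
print(f"with more history: {low_large:.2f} to {high_large:.2f}")
```

Showing the range alongside the score tells the clinician whether a 75 percent figure rests on a handful of cases or on hundreds.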

Decision Traceability

Healthcare organizations require logs showing how and when a decision was generated, especially in high-risk environments.
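
A minimal sketch of such a log entry follows, assuming a simple append-only design in which each record stores the model version, a digest of the inputs, the output, and the hash of the previous record so that later edits are detectable. The field names and the functions `build_audit_record` and `verify_chain` are hypothetical. Storing a digest instead of raw patient values is one design option among several; real retention requirements may differ.

```python
import hashlib
import json
from datetime import datetime, timezone


def _digest(value):
    """SHA-256 of a canonical JSON encoding (sorted keys keep it deterministic)."""
    return hashlib.sha256(json.dumps(value, sort_keys=True).encode("utf-8")).hexdigest()


def build_audit_record(model_name, model_version, inputs, output, previous_hash=""):
    """Create one log entry that is chained to the entry before it."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_name": model_name,
        "model_version": model_version,
        "input_digest": _digest(inputs),  # digest only, no raw patient data
        "output": output,
        "previous_hash": previous_hash,
    }
    record["record_hash"] = _digest(record)
    return record


def verify_chain(records):
    """Return True if no record was altered and the chain links are intact."""
    previous = ""
    for record in records:
        body = {key: value for key, value in record.items() if key != "record_hash"}
        if record["previous_hash"] != previous or record["record_hash"] != _digest(body):
            return False
        previous = record["record_hash"]
    return True


# Hypothetical model name, version, and values, for illustration only.
first = build_audit_record("sepsis-risk", "1.4.2", {"wbc_count": 18.0}, {"risk": 0.71})
second = build_audit_record("sepsis-risk", "1.4.2", {"wbc_count": 9.5}, {"risk": 0.22},
                            previous_hash=first["record_hash"])
print(verify_chain([first, second]))
```

Because every record carries the model version, the same log also answers the question of which version produced a given decision during a retrospective review.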

Scenario Sensitivity

Understanding how slight changes in inputs would alter an outcome supports clinical reasoning.
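
A simple form of this is a one-at-a-time "what if" check: change a single input by a clinically plausible amount, re-run the model, and report how far the prediction moves. In the sketch below, `toy_risk` is a made-up stand-in for a deployed model and `what_if` is a hypothetical helper; the coefficients and step sizes are illustrative assumptions.

```python
import math


def toy_risk(patient):
    """Stand-in for a deployed model: maps patient features to a probability."""
    log_odds = -6.0 + 0.03 * patient["heart_rate"] + 0.6 * patient["lactate"]
    return 1.0 / (1.0 + math.exp(-log_odds))


def what_if(model, patient, changes):
    """Perturb one feature at a time and return the resulting change in risk."""
    base = model(patient)
    deltas = {}
    for feature, step in changes.items():
        altered = dict(patient)  # copy so the original record is never modified
        altered[feature] += step
        deltas[feature] = model(altered) - base
    return base, deltas


patient = {"heart_rate": 110.0, "lactate": 2.5}
base, deltas = what_if(toy_risk, patient, {"heart_rate": 10.0, "lactate": 1.0})

print(f"baseline risk: {base:.3f}")
for feature, delta in deltas.items():
    print(f"{feature}: {delta:+.3f}")
```

In this toy setup, a one-unit rise in lactate moves the prediction more than a ten-beat rise in heart rate, which tells the care team which measurement is worth rechecking first.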

Importantly, explainability must be role-specific:

- Data scientists need model-level transparency.

- Clinicians require concise and clinically meaningful explanations.

- Administrators need governance visibility.

- Compliance teams require documentation and audit trails.

If explanations are overly technical, they fail to support frontline adoption. If they are overly simplified, they fail to meet governance requirements. Designing balanced explainability is a strategic task.

Practical Applications Where Explainable AI Delivers Measurable Value

Explainability becomes most powerful when embedded in real clinical workflows.

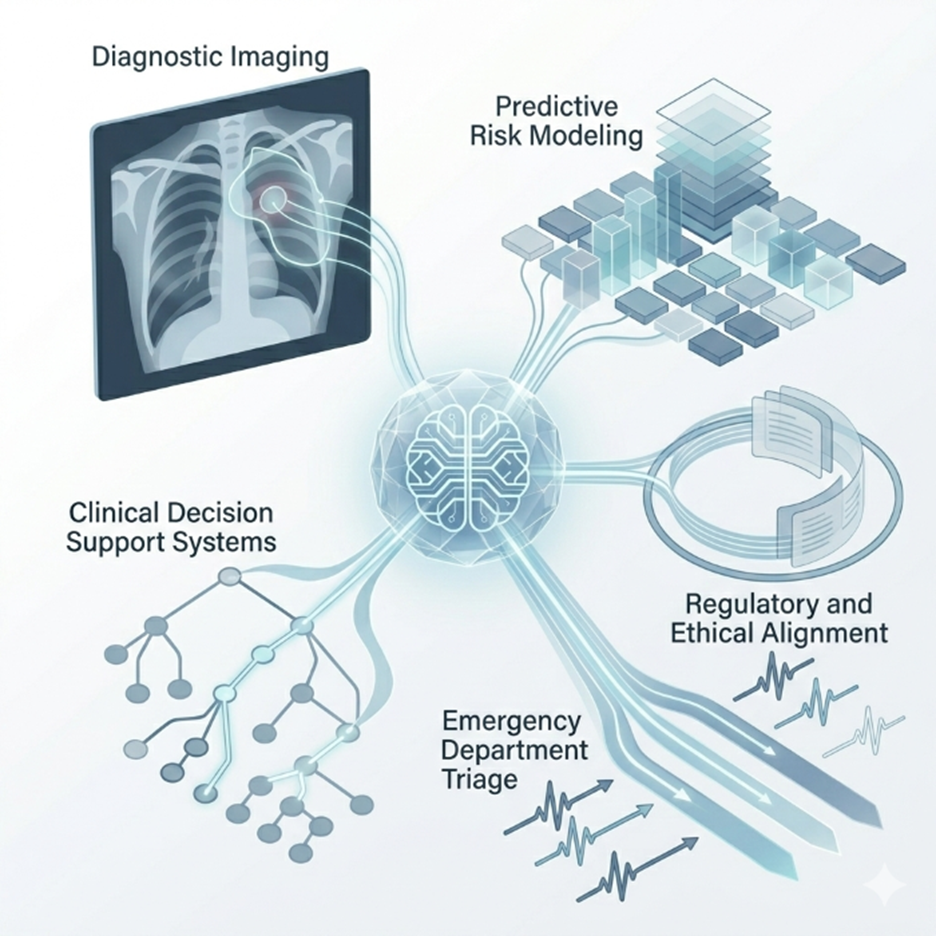

Diagnostic Imaging

AI-assisted imaging tools can highlight specific areas of a scan that influenced a diagnosis. This visual clarity allows radiologists to verify findings rather than passively accept them.

In practice, this improves collaboration between human expertise and algorithmic analysis. Radiologists remain in control, but AI provides structured support. The transparency reduces hesitation and strengthens validation processes.

Predictive Risk Modeling

Hospitals increasingly use predictive analytics to identify patients at risk of deterioration, readmission, or complications.

Without explainability, a high-risk label may trigger unnecessary interventions or be dismissed entirely. With explainability, care teams can see which clinical indicators contributed to the classification. This allows targeted action rather than generalized escalation.

Risk models become more than statistical signals. They become actionable insights.

Clinical Decision Support Systems

Medication recommendations, treatment pathways, and dosage adjustments require careful justification.

An explainable system clarifies:

- Which patient characteristics influenced the recommendation

- Whether established clinical guidelines were considered

- How patient history contributed to the output

This transparency can reduce alert fatigue and increase clinician engagement. It shifts AI from a noisy notification system to a credible advisor.

Emergency Department Triage

AI-driven triage systems can help prioritize patients in high-volume settings. However, prioritization decisions must be defensible.

Explainability helps show that triage outputs are driven by measurable physiological signals rather than opaque thresholds. In high-pressure environments, that clarity reduces risk and supports operational consistency.

Regulatory and Ethical Alignment

Healthcare AI operates within a tightening regulatory landscape. Transparency is increasingly embedded in policy discussions and oversight frameworks.

Explainable AI supports compliance in several ways:

- Maintains documented decision logs

- Enables retrospective review

- Supports fairness and bias audits

- Provides traceable model version history

Bias detection is particularly critical. Historical healthcare data may contain embedded inequities. Without explainability tools, biased outcomes may remain undetected. With structured transparency, organizations can identify patterns across demographic groups and implement corrective strategies.
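
One basic audit of this kind compares how often the model catches true cases in each demographic group. The sketch below computes recall per group from labeled outcomes. The records are synthetic, the group labels are placeholders, and `recall_by_group` is a hypothetical helper; a real audit would look at several metrics and at sample sizes before drawing conclusions.

```python
from collections import defaultdict


def recall_by_group(records):
    """Share of true cases the model flagged, computed separately per group.

    Each record is (group, actual, predicted) with 1 meaning the event occurred
    or was flagged. Groups with no true cases are left out of the result.
    """
    positives = defaultdict(int)
    hits = defaultdict(int)
    for group, actual, predicted in records:
        if actual == 1:
            positives[group] += 1
            if predicted == 1:
                hits[group] += 1
    return {group: hits[group] / positives[group] for group in positives}


# Synthetic records for illustration only.
records = [
    ("group_a", 1, 1), ("group_a", 1, 1), ("group_a", 1, 1), ("group_a", 1, 0), ("group_a", 0, 0),
    ("group_b", 1, 1), ("group_b", 1, 0), ("group_b", 1, 0), ("group_b", 1, 0), ("group_b", 0, 0),
]

recalls = recall_by_group(records)
gap = max(recalls.values()) - min(recalls.values())
for group, value in sorted(recalls.items()):
    print(f"{group}: recall {value:.2f}")
print(f"largest gap between groups: {gap:.2f}")
```

A large gap does not prove bias on its own, but it marks where a closer review of the data and the model is warranted.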

From a legal standpoint, defensibility matters. If an adverse event occurs, healthcare organizations must demonstrate responsible system design and oversight. Explainability strengthens institutional resilience.

Designing Explainability into System Architecture

Explainability should not be treated as a post-deployment patch. It must be embedded in the design phase.

Key architectural considerations include:

Structured Data Governance

Clear documentation of data sources and preprocessing decisions ensures transparency at the foundation.

Feature Documentation

Every input variable should be traceable and clinically validated.

Model Version Control

Healthcare AI systems evolve. Maintaining version history ensures accountability over time.

Continuous Monitoring

AI systems must be observed in production environments to detect performance shifts or unintended outcomes.
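
One common way to watch for such shifts is to compare the distribution of recent model scores (or inputs) against a reference period. The sketch below uses the population stability index (PSI) over fixed bins. The bin edges, sample scores, and the `ALERT_THRESHOLD` of 0.25 are assumptions for illustration; 0.25 is a frequently quoted rule of thumb, not a validated clinical standard.

```python
import math

ALERT_THRESHOLD = 0.25  # assumed rule-of-thumb cutoff, to be tuned per deployment


def bin_fractions(values, edges, floor=1e-6):
    """Fraction of values in each bin; empty bins are floored to avoid log(0)."""
    if not values:
        raise ValueError("values must not be empty")
    counts = [0] * (len(edges) + 1)
    for value in values:
        index = sum(value >= edge for edge in edges)  # number of edges at or below value
        counts[index] += 1
    return [max(count / len(values), floor) for count in counts]


def psi(expected, actual, edges):
    """Population stability index between a reference sample and a recent sample."""
    expected_fracs = bin_fractions(expected, edges)
    actual_fracs = bin_fractions(actual, edges)
    return sum((a - e) * math.log(a / e) for e, a in zip(expected_fracs, actual_fracs))


EDGES = [0.25, 0.5, 0.75]
baseline_scores = [0.1, 0.2, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7]  # synthetic reference period
recent_scores = [0.5, 0.6, 0.6, 0.7, 0.8, 0.8, 0.9, 0.9]    # synthetic recent period

drift = psi(baseline_scores, recent_scores, EDGES)
print(f"PSI: {drift:.2f}")
if drift > ALERT_THRESHOLD:
    print("score distribution has shifted; trigger a model review")
```

A drift alert like this says only that the population or the model's behavior has changed; deciding whether performance has actually degraded still requires checking outcomes.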

A layered architecture often proves effective:

- Technical transparency for engineering teams

- Clinical interpretability for medical staff

- Executive reporting for governance oversight

This structure aligns operational efficiency with accountability.

Scaling AI Responsibly

Many healthcare organizations begin AI adoption through pilot programs. Early results often focus on performance metrics such as accuracy, recall, or AUC scores.

However, sustainable AI deployment requires broader evaluation criteria:

- Clinical acceptance

- Workflow integration

- Regulatory readiness

- Ongoing monitoring and validation

Explainability influences each of these dimensions.

When clinicians understand why a model produces a recommendation, resistance decreases. When administrators can monitor performance trends, governance strengthens. When regulators can review documentation, institutional confidence grows.

Trust is not an abstract concept. It is operationalized through structured transparency.

The Road Ahead for Explainable Healthcare AI

Looking forward, explainability will likely become a baseline requirement rather than a competitive advantage.

Future developments may include:

- Greater adoption of inherently interpretable models

- Standardized explainability reporting frameworks

- Integrated runtime governance dashboards

- More rigorous bias monitoring protocols

- Formal regulatory mandates around AI transparency

Healthcare will continue integrating AI into core clinical pathways. As autonomy increases, expectations for accountability will also rise.

Organizations that proactively invest in explainability will be better positioned to innovate without compromising safety or compliance.

From Capability to Confidence

AI has the potential to transform healthcare delivery. It can enhance early detection, streamline operations, and improve patient outcomes.

However, transformation requires confidence.

Healthcare institutions cannot depend on opaque systems in high-stakes environments. They require AI solutions that are transparent, traceable, and defensible.

Explainable AI provides the bridge from raw capability to operational trust.

Building Responsible Healthcare AI with Evermethod Inc

Designing and deploying explainable AI in healthcare demands architectural discipline. It requires alignment across data governance, system integration, compliance oversight, and performance monitoring.

Evermethod Inc partners with healthcare organizations to design production-grade AI systems built on structured explainability and responsible autonomy. The focus is long-term stability, measurable outcomes, and regulatory alignment.

If your organization is advancing AI initiatives in clinical or operational domains, this is the moment to embed explainability into your foundation.

Connect with Evermethod Inc to architect healthcare AI systems that deliver innovation with accountability and performance with trust.
