Artificial intelligence systems are evolving beyond single-step predictions. A new class of systems, often referred to as agentic AI, is designed to interpret goals, plan actions, and execute tasks across multiple steps and systems.

This shift expands the range of problems AI can address. Tasks that previously required human coordination, such as managing workflows or interacting with multiple tools, can now be partially automated.

At the same time, deploying these systems in production introduces a different set of engineering considerations. These challenges are not simply extensions of traditional machine learning problems. They emerge from the way agentic systems operate as dynamic, multi-component environments.

Understanding these factors is essential for building systems that are reliable, maintainable, and scalable.

What Makes Agentic AI Different: The Execution Loop Has Changed

The most fundamental difference lies in how these systems execute tasks.

Traditional AI systems follow a linear model:

Input → Model → Output

Each request is processed independently, and no state carries over from one call to the next.

Agentic systems follow a continuous loop:

Goal → Plan → Act → Observe → Reflect → Repeat

This allows the system to move toward an objective through iteration rather than producing a single response.

An agent may decompose a task, invoke tools, evaluate results, and revise its approach based on what it observes. These decisions are made during execution and depend on context.

The behavior of the system is therefore shaped by the interaction between planning, execution, memory, and evaluation.
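The loop described above can be sketched in a few lines. This is a minimal illustration, not a reference design: `run_agent`, `toy_plan`, and `toy_act` are hypothetical names, the planner is a plain function standing in for a model call, and reflection is folded into the planner seeing all prior observations.

```python
from typing import Callable, List, Optional

def run_agent(goal: str,
              plan: Callable[[str, List[str]], Optional[str]],
              act: Callable[[str], str],
              max_steps: int = 5) -> List[str]:
    """Plan, act, and observe until the planner returns None or the step cap is hit."""
    observations: List[str] = []
    for _ in range(max_steps):
        # Plan: the planner sees the goal and everything observed so far.
        action = plan(goal, observations)
        if action is None:
            # The planner judges the goal to be met.
            break
        # Act, then record the observation for the next iteration.
        observations.append(act(action))
    return observations

# Toy stand-ins (assumptions): a planner that stops after three observations
# and a tool that just echoes the action it was given.
def toy_plan(goal: str, observations: List[str]) -> Optional[str]:
    return None if len(observations) >= 3 else f"step-{len(observations) + 1}"

def toy_act(action: str) -> str:
    return f"did {action}"

result = run_agent("demo goal", toy_plan, toy_act)
print(result)
```

The `max_steps` cap is the important design choice here: the loop always terminates, even when the planner never declares the goal complete.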

Reliability and Predictability

When agentic systems are deployed in production, one of the first noticeable changes is variability in execution.

Because decisions are made step by step, small differences early in the process can influence later outcomes. In simple workflows, this effect may be limited. In more complex scenarios, it can accumulate across steps.

This leads to a shift in how reliability is defined. The goal is not identical outputs, but consistent outcomes within acceptable boundaries.

In practice, teams introduce structure around execution. Intermediate validation, controlled tool usage, and limits on iteration help ensure that the system behaves within expected parameters.

Reliability becomes a function of how well variability is managed rather than eliminated.
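One way to picture intermediate validation combined with an iteration limit is the sketch below. The names `run_validated_step`, `StepValidationError`, and `flaky_step` are hypothetical, and the validity check is a simple predicate chosen for illustration.

```python
from typing import Callable

class StepValidationError(Exception):
    """Raised when a step never produces a result that passes validation."""

def run_validated_step(step: Callable[[], dict],
                       is_valid: Callable[[dict], bool],
                       max_attempts: int = 3) -> dict:
    """Run a step, accept the first result that passes validation, and give up after max_attempts."""
    for _ in range(max_attempts):
        result = step()
        if is_valid(result):
            return result
    raise StepValidationError(f"no valid result after {max_attempts} attempts")

# Toy step (assumption): the first attempt returns an out-of-range value.
attempts = {"n": 0}

def flaky_step() -> dict:
    attempts["n"] += 1
    return {"total": -1} if attempts["n"] < 2 else {"total": 42}

checked = run_validated_step(flaky_step, lambda r: r["total"] >= 0)
print(checked, "after", attempts["n"], "attempts")
```

The outputs of individual attempts differ, but the caller only ever sees a result inside the accepted boundary or an explicit error.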

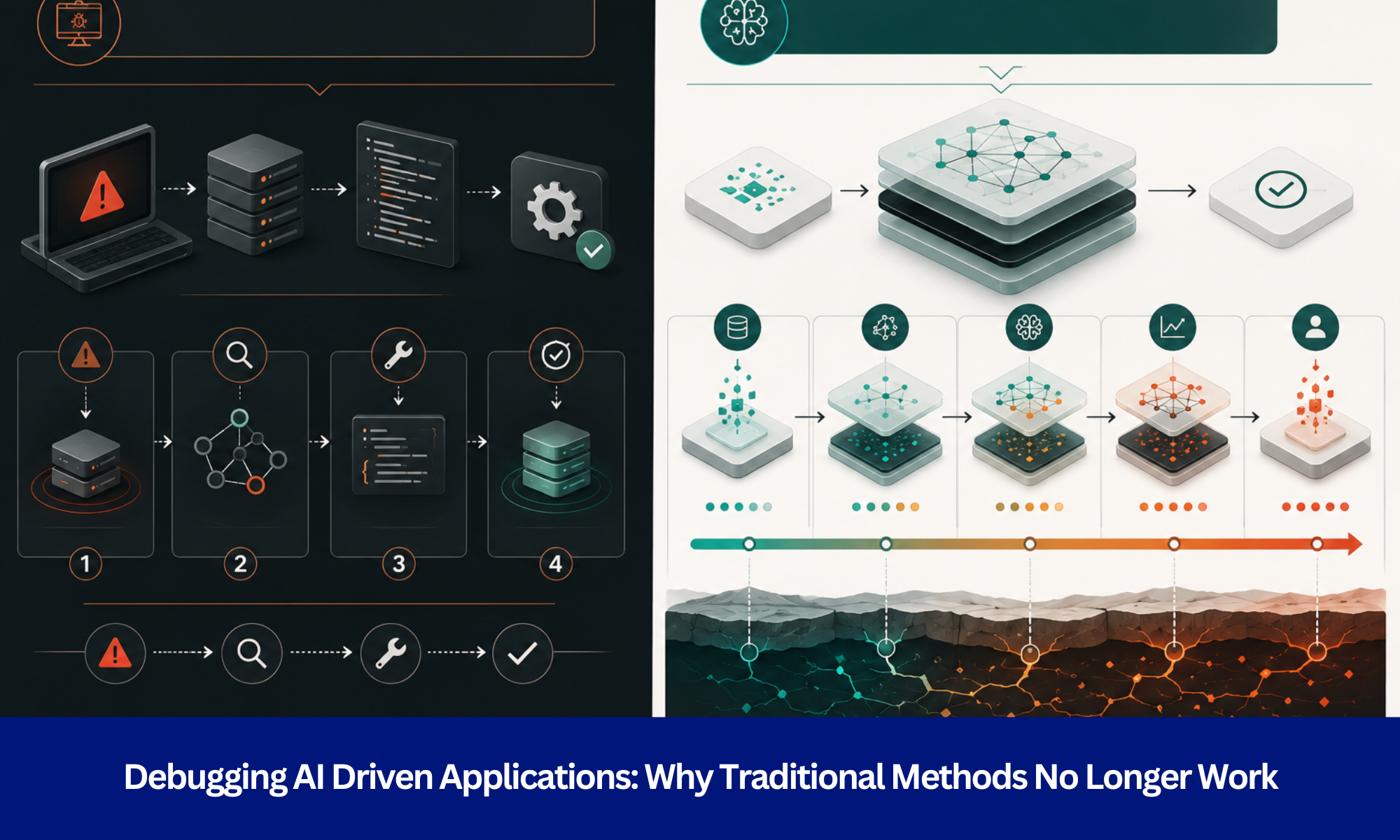

Observability and Debugging

As systems become more autonomous, understanding their behavior requires deeper visibility.

In traditional systems, examining inputs and outputs is often sufficient. In agentic systems, the outcome is shaped by a sequence of intermediate decisions.

A failure may originate from how a task was planned, how a tool responded, or how context was interpreted. Without visibility into these steps, identifying the root cause becomes difficult.

For this reason, observability is treated as a core capability. Systems that perform well in production typically include detailed execution traces, step-level logs, and the ability to reconstruct past runs.

This allows teams to move from guessing behavior to systematically analyzing it.
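A step-level trace can be as simple as the sketch below, which records each step's kind, input, output, and duration, and serializes the run so it can be inspected later. `StepRecord`, `RunTrace`, and the field names are assumptions made for illustration; production systems often use a dedicated tracing library instead.

```python
import json
import time
from dataclasses import asdict, dataclass, field
from typing import Callable, List

@dataclass
class StepRecord:
    index: int
    kind: str            # for example "plan", "tool_call", or "reflect"
    input_text: str
    output_text: str
    duration_s: float

@dataclass
class RunTrace:
    run_id: str
    steps: List[StepRecord] = field(default_factory=list)

    def record(self, kind: str, input_text: str, fn: Callable[[str], object]) -> object:
        """Run fn on input_text and append a record of what happened."""
        start = time.perf_counter()
        output = fn(input_text)
        elapsed = time.perf_counter() - start
        self.steps.append(StepRecord(len(self.steps), kind, input_text, str(output), elapsed))
        return output

    def to_json(self) -> str:
        """Serialize the whole run so it can be stored and reconstructed later."""
        return json.dumps(asdict(self))

trace = RunTrace("run-1")
trace.record("plan", "summarize ticket 17", lambda s: "call fetch_ticket")
trace.record("tool_call", "fetch_ticket 17", lambda s: "ticket body")
replayed = json.loads(trace.to_json())
print([step["kind"] for step in replayed["steps"]])
```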

Tool Integration and System Boundaries

Agentic systems rely heavily on external tools. These tools introduce variability that is outside the control of the core system.

APIs may fail, return inconsistent data, or respond with delays. When such behavior is part of the execution loop, it directly affects system performance.

This makes integration design a central concern. Systems must be built with the expectation that tools will not always behave reliably.

Approaches that improve resilience include standardizing interfaces, handling failures explicitly, and introducing abstraction layers between the agent and external systems.

Over time, this results in a more stable foundation for execution.
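A thin abstraction layer with explicit failure handling might look like the following sketch. `ToolResult`, `call_tool`, and `flaky_lookup` are hypothetical names; the retry count and backoff values are placeholders, not recommendations.

```python
import time
from dataclasses import dataclass
from typing import Any, Callable, Optional

@dataclass
class ToolResult:
    """Uniform result shape so the agent never has to handle raw exceptions."""
    ok: bool
    value: Any = None
    error: Optional[str] = None

def call_tool(tool: Callable[..., Any], *args: Any,
              retries: int = 2, backoff_s: float = 0.0, **kwargs: Any) -> ToolResult:
    """Call a tool, retrying on any exception, and report the outcome explicitly."""
    last_error: Optional[str] = None
    for attempt in range(retries + 1):
        try:
            return ToolResult(ok=True, value=tool(*args, **kwargs))
        except Exception as exc:
            last_error = f"{type(exc).__name__}: {exc}"
            if attempt < retries:
                # Exponential backoff between attempts.
                time.sleep(backoff_s * (2 ** attempt))
    return ToolResult(ok=False, error=last_error)

# Toy tool (assumption): fails on the first call, succeeds afterwards.
calls = {"n": 0}

def flaky_lookup(key: str) -> dict:
    calls["n"] += 1
    if calls["n"] == 1:
        raise TimeoutError("upstream timed out")
    return {"key": key, "status": "active"}

result = call_tool(flaky_lookup, "acct-1", retries=2)
print(result)
```

Because every tool goes through the same wrapper, the agent can treat a failed call as one more observation to reason about rather than as a crash.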

Memory and Context Management

Memory enables agentic systems to maintain continuity across steps. It allows them to reference prior actions and build context over time.

However, as more information is stored, relevance becomes a concern. Outdated or unnecessary context can influence decisions in unintended ways.

This introduces a trade-off between retaining useful information and avoiding noise.

Effective systems treat memory as a managed component. They prioritize relevant context, remove outdated entries, and reset state when needed.

This ensures that memory supports decision-making rather than degrading it.
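The sketch below shows one way to treat memory as a managed component: entries expire by age, the store is capped in size, recall returns only entries that overlap with the query, and state can be reset. `ManagedMemory` is a hypothetical class, and keyword overlap is a deliberately crude stand-in for whatever relevance scoring a real system would use.

```python
import time
from dataclasses import dataclass
from typing import List, Optional

@dataclass
class MemoryEntry:
    text: str
    created_at: float

class ManagedMemory:
    def __init__(self, max_entries: int = 50, max_age_s: float = 3600.0) -> None:
        self.max_entries = max_entries
        self.max_age_s = max_age_s
        self.entries: List[MemoryEntry] = []

    def add(self, text: str, now: Optional[float] = None) -> None:
        now = time.time() if now is None else now
        self.entries.append(MemoryEntry(text, now))
        self.prune(now)

    def prune(self, now: Optional[float] = None) -> None:
        """Drop entries older than max_age_s, then keep only the newest max_entries."""
        now = time.time() if now is None else now
        fresh = [e for e in self.entries if now - e.created_at <= self.max_age_s]
        self.entries = fresh[-self.max_entries:]

    def recall(self, query: str, k: int = 3, now: Optional[float] = None) -> List[str]:
        """Return up to k entries sharing at least one word with the query, best match first."""
        self.prune(now)
        words = set(query.lower().split())
        scored = [(len(words & set(e.text.lower().split())), e) for e in self.entries]
        ranked = sorted((s for s in scored if s[0] > 0), key=lambda s: s[0], reverse=True)
        return [e.text for _, e in ranked[:k]]

    def reset(self) -> None:
        self.entries.clear()

mem = ManagedMemory(max_entries=2, max_age_s=10)
mem.add("user prefers csv export", now=0)
mem.add("invoice 17 was paid", now=5)
mem.add("invoice 18 is overdue", now=8)   # the oldest entry is evicted by the size cap
print(mem.recall("invoice overdue", now=9))
```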

Security and Operational Risk

Agentic systems expand the scope of what AI can do. They can access systems, trigger workflows, and interact with external services.

This increased capability also increases exposure.

Inputs to the system may not always be trustworthy. In addition, actions taken by the system must be controlled to prevent unintended outcomes.

Security must therefore be implemented at multiple levels. Input validation, controlled permissions, and isolation of sensitive operations are common practices.

The system must be designed to operate within clearly defined boundaries.
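Controlled permissions can be illustrated with an allowlist placed in front of the agent's tools, with sensitive operations requiring explicit approval. `ToolGate`, `PermissionDenied`, and the tool names are hypothetical; how approval is actually granted (a human reviewer, a policy engine) is outside this sketch.

```python
from typing import Any, Callable, Dict, FrozenSet, Iterable

class PermissionDenied(Exception):
    """Raised when the agent requests an action outside its boundaries."""

class ToolGate:
    def __init__(self, tools: Dict[str, Callable[..., Any]],
                 allowed: Iterable[str],
                 needs_approval: Iterable[str] = ()) -> None:
        self.tools = tools
        self.allowed: FrozenSet[str] = frozenset(allowed)
        self.needs_approval: FrozenSet[str] = frozenset(needs_approval)

    def invoke(self, name: str, *, approved: bool = False, **kwargs: Any) -> Any:
        # Deny by default: unknown or unlisted tools are never executed.
        if name not in self.tools or name not in self.allowed:
            raise PermissionDenied(f"tool not permitted: {name}")
        # Sensitive operations run only with an explicit approval flag.
        if name in self.needs_approval and not approved:
            raise PermissionDenied(f"approval required: {name}")
        return self.tools[name](**kwargs)

tools = {
    "read_ticket": lambda ticket_id: f"ticket {ticket_id}",
    "issue_refund": lambda amount: f"refunded {amount}",
    "delete_account": lambda user: f"deleted {user}",
}
gate = ToolGate(tools, allowed={"read_ticket", "issue_refund"},
                needs_approval={"issue_refund"})
print(gate.invoke("read_ticket", ticket_id=17))
```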

Cost and Resource Efficiency

Agentic systems often require multiple iterations to complete a task. Each step contributes to computational cost.

In practice, cost is not fixed. It depends on how many steps the system takes during execution and what each step invokes.

Without optimization, repeated steps or inefficient workflows can increase resource usage significantly.

Managing cost involves improving execution efficiency. Reducing unnecessary iterations, reusing intermediate results, and setting limits on execution are common approaches. These measures help ensure that systems remain scalable.
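Two of these approaches, reusing intermediate results and setting limits on execution, can be combined in a small sketch. `CostTracker`, `BudgetExceeded`, and `make_cached_step` are hypothetical names, and the cost units are arbitrary; a real system would charge tokens, API calls, or currency.

```python
from typing import Callable, Dict

class BudgetExceeded(Exception):
    """Raised when a run would spend more than its allotted budget."""

class CostTracker:
    def __init__(self, max_units: float) -> None:
        self.max_units = max_units
        self.spent = 0.0

    def charge(self, units: float) -> None:
        if self.spent + units > self.max_units:
            raise BudgetExceeded(f"budget of {self.max_units} units exhausted")
        self.spent += units

def make_cached_step(fn: Callable[[str], str], tracker: CostTracker,
                     cost_per_call: float) -> Callable[[str], str]:
    """Wrap a step so repeated inputs reuse the stored result and cost nothing."""
    cache: Dict[str, str] = {}

    def wrapper(arg: str) -> str:
        if arg not in cache:
            tracker.charge(cost_per_call)   # only cache misses are charged
            cache[arg] = fn(arg)
        return cache[arg]

    return wrapper

tracker = CostTracker(max_units=10)
summarize = make_cached_step(lambda text: text.upper(), tracker, cost_per_call=4)
summarize("report a")
summarize("report a")   # served from cache, no charge
summarize("report b")
print(tracker.spent)
```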

Evaluation and Measuring Performance

Evaluating agentic systems requires a broader perspective than traditional metrics provide. Since tasks involve multiple steps, success is defined by whether the goal was reached rather than by the quality of a single output.

This makes evaluation more contextual. It includes not only whether the task was completed, but also how efficiently it was done.

Teams typically combine task-specific metrics with real-world feedback. This allows performance to be assessed in a way that reflects actual system behavior.
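As an illustration of measuring both completion and efficiency, the sketch below aggregates a handful of run records. `RunResult`, `summarize_runs`, and the sample values are made up for the example and are not measurements from any real system.

```python
from dataclasses import dataclass
from typing import Dict, List

@dataclass
class RunResult:
    task_id: str
    completed: bool
    steps: int
    cost_units: float

def summarize_runs(runs: List[RunResult]) -> Dict[str, float]:
    """Report goal completion alongside how efficiently completed runs got there."""
    done = [r for r in runs if r.completed]
    total_cost = sum(r.cost_units for r in runs)
    return {
        "completion_rate": len(done) / len(runs) if runs else 0.0,
        "avg_steps_when_completed": sum(r.steps for r in done) / len(done) if done else 0.0,
        # Failed runs still cost money, so their cost is spread over the successes.
        "cost_per_completed_task": total_cost / len(done) if done else float("inf"),
    }

# Illustrative records only.
runs = [
    RunResult("a", completed=True, steps=4, cost_units=2.0),
    RunResult("b", completed=False, steps=10, cost_units=5.0),
    RunResult("c", completed=True, steps=6, cost_units=3.0),
]
report = summarize_runs(runs)
print(report)
```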

Scaling and Multi-Agent Coordination

As systems grow, multiple agents may be introduced to handle different tasks. This increases capability but also introduces coordination challenges.

Without clear structure, agents may overlap in responsibilities or interfere with each other. This can reduce efficiency and create unpredictable behavior.

To address this, systems are designed with defined roles, orchestration layers, and communication patterns.

At this stage, the system begins to resemble a distributed architecture, where coordination is as important as individual performance.
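Defined roles and an orchestration layer can be sketched as a registry that gives each role exactly one owner and routes tasks accordingly. `Orchestrator` and the role names are hypothetical, and the agents are plain functions standing in for full agent loops.

```python
from typing import Callable, Dict, List, Tuple

class Orchestrator:
    def __init__(self) -> None:
        self.agents: Dict[str, Callable[[str], str]] = {}

    def register(self, role: str, handler: Callable[[str], str]) -> None:
        # One owner per role prevents agents from overlapping in responsibility.
        if role in self.agents:
            raise ValueError(f"role already assigned: {role}")
        self.agents[role] = handler

    def dispatch(self, tasks: List[Tuple[str, str]]) -> List[Tuple[str, str]]:
        """Route each (role, payload) task to its agent, preserving task order."""
        results = []
        for role, payload in tasks:
            if role not in self.agents:
                raise KeyError(f"no agent registered for role: {role}")
            results.append((role, self.agents[role](payload)))
        return results

orchestrator = Orchestrator()
orchestrator.register("research", lambda topic: f"notes on {topic}")
orchestrator.register("writer", lambda brief: f"draft from {brief}")
outputs = orchestrator.dispatch([("research", "pricing"), ("writer", "pricing notes")])
print(outputs)
```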

Conclusion

Agentic AI systems represent a shift toward more dynamic and capable forms of automation. They enable systems to plan, act, and adapt across multiple steps, which single-step prediction models do not do.

At the same time, they introduce challenges that are rooted in system design rather than model performance. Addressing these challenges requires a focus on structure, visibility, and controlled execution.

Organizations that approach agentic AI with this mindset are better positioned to build systems that perform reliably in real-world environments.

The Shift That Matters

As organizations move from experimentation to production, the design of agentic systems becomes increasingly important.

Evermethod Inc works with teams to design and deploy agentic AI systems that are reliable, scalable, and aligned with real-world requirements.

For teams evaluating how to operationalize agentic AI, a structured and system-focused approach can significantly improve outcomes.
